The 7 Levels of AI Proficiency.

A measurable ladder from where your team is today to where the work needs them to be. Each level is defined by a human skill, not a technical one.

The white-collar factory is closing.

The corporate org chart most companies still use was built by the Romans for moving information across an empire when human beings were the only intelligence available. AI just collapsed the cost of running it. The companies that restructure compound. The ones that bolt AI onto the old hierarchy do not.

“You are not crazy. You were just early.”

This is the foundational thesis behind everything LaunchReady builds: the framework, the assessment, the engagement, the books.

Read the manifesto

Most AI training teaches tools.

We measure people.

Most AI training teaches the buttons. Which prompt to type. Which model to pick. That is Level 2 thinking applied to a Level 7 problem.

The 7 Levels of AI Proficiency measures something different. The human capabilities that determine how effectively you direct AI. Not what AI can do. What you can do with it.

At the lower levels, technical skill counts. You learn to write better prompts, evaluate output, and manage context. At the top levels, the skills that count are entirely human: design thinking, systems integration, stakeholder navigation, and leadership.

The professionals who thrive in an AI-driven economy are not the most technical. They are the most human.

Created by Harrison Painter. LaunchReady.ai. 2026.

Seven levels.

One ladder.

Each level has its own challenge coin, its own human skill, and its own deep-dive page. Click any level to read the full breakdown.

Level 1, Cadet (AI Aware)

You know AI exists and you have tried it. That alone puts you ahead of a surprising number of professionals. Right now you are typing in requests the way you would type into a search engine. The outputs feel hit-or-miss because they are. You do not yet know what good looks like, which makes it hard to see what you are missing.

What to learn next. Your prompts are vague, so your results are inconsistent. Learn to give AI clear, structured instructions: who the output is for, what you need, the format you want, and at least one constraint.
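One way to make the four parts of a structured instruction concrete is to treat them as fields that must all be filled in before a prompt is sent. The sketch below is illustrative only: `PromptBrief` and its field names are hypothetical and not part of the framework or any AI tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptBrief:
    """Hypothetical container for the four parts of a structured prompt."""
    audience: str        # who the output is for
    request: str         # what you need
    output_format: str   # the format you want
    constraints: List[str] = field(default_factory=list)  # at least one is required

    def render(self) -> str:
        """Return the brief as plain text ready to paste into a chat window."""
        if not self.constraints:
            raise ValueError("include at least one constraint")
        lines = [
            f"Audience: {self.audience}",
            f"Request: {self.request}",
            f"Format: {self.output_format}",
            "Constraints:",
        ]
        lines += [f"- {item}" for item in self.constraints]
        return "\n".join(lines)


brief = PromptBrief(
    audience="A CFO with five minutes to read",
    request="Summarize the attached vendor contract",
    output_format="Three bullet points",
    constraints=["Under 100 words"],
)
print(brief.render())
```

The check in `render` is the point of the sketch: a brief with no constraint fails loudly instead of producing a vague request.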

Deep dive →

Level 2, Ensign (Prompt Engineer)

You know how to give AI clear instructions. You include context, constraints, and format. Your results are noticeably better than most people's because your inputs are better. You are still treating AI like a vending machine, though. Put in a request, take out a result.

What to learn next. Treat AI like a thinking partner, not a vending machine. Ask follow-up questions. Push back on weak answers. The first answer is a draft, not a deliverable.

Deep dive →

Level 3, Lieutenant (Critical Thinker)

You use AI as a thinking partner. You ask follow-up questions, stress-test ideas, and push AI to surface things you have not considered. Most people quit when AI gives a bad answer. You push back. That persistence is the skill that separates you from the majority.

What to learn next. The longer you work with AI in a single conversation, the worse the output gets. Learning to manage context degradation (when to start fresh, how to carry context forward) is the difference between inconsistent results and reliable ones.

Deep dive →

Level 4, Commander (Context Engineer)

You understand something most AI users never figure out. Conversations have a shelf life. You know when to start fresh, how to carry forward what counts, and why a clean session with good context beats a long session with a full memory. Your results are consistent because you manage the container, not just the content.

What to learn next. Your outputs are strong but based on general knowledge. AI does not know your business, your customers, or your data. The next level is designing how AI connects to real information.

Deep dive →

Level 5, Captain (Design Thinker)

You are no longer just using AI for yourself. You are designing AI experiences for others. You think about what data AI needs, how workflows should be structured, and how to scope access responsibly. You direct what gets built, even if you are not writing the code yourself.

What to learn next. You are rebuilding from scratch every time. The next level is documenting your best workflows so AI runs them consistently without you re-explaining everything.

Deep dive →

Level 6, Admiral (Systems Integrator)

You have stopped starting from scratch. You document your best AI processes into reusable workflows with clear steps, defined inputs, and success criteria. Your results are consistent because the system is consistent. You are building infrastructure that compounds.

What to learn next. Your workflows run in isolation. The final level is connecting them into pipelines where the output of one step feeds the input of the next, and the whole system runs with minimal intervention.
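The idea of a pipeline, where each step's output becomes the next step's input, can be sketched in a few lines. This is a minimal illustration under stated assumptions: the three step functions are hypothetical stand-ins written as plain text transformations, where a real pipeline would wrap a documented AI workflow in each step.

```python
from functools import reduce
from typing import Callable, Sequence

# A step takes text in and returns text out.
Step = Callable[[str], str]


def run_pipeline(steps: Sequence[Step], initial_input: str) -> str:
    """Feed each step's output into the next step, in order."""
    return reduce(lambda data, step: step(data), steps, initial_input)


# Hypothetical stand-in steps (assumptions for illustration only).
def clean_notes(text: str) -> str:
    return " ".join(text.split())  # collapse stray whitespace


def draft_summary(text: str) -> str:
    return f"Summary: {text}"


def format_for_email(text: str) -> str:
    return f"Hi team,\n\n{text}"


result = run_pipeline(
    [clean_notes, draft_summary, format_for_email],
    "  raw   meeting notes ",
)
print(result)
```

Because every step shares one signature, steps can be reordered, replaced, or tested on their own without touching the rest of the chain.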

Deep dive →

Level 7, Mission Director (AI Orchestrator)

You chain multiple AI workflows into pipelines that run with minimal human intervention. You think in systems, not tasks. You design feedback loops so the system improves over time. The reason you are at the top is not technical skill. It is human skill. You change how people work. You build cultures that embrace AI. The job of the future is yours because you are the most human, not the most technical.

What comes next. You are at the destination. From here, the work is depth and scale. More pipelines. Better feedback loops. Teaching others to build at this level. This is the job of the future.

Deep dive →

Three relationships.

One progression.

The levels are not a ladder you climb by learning more tools. They are a progression of human capability.

Your relationship with AI

Can you give it clear instructions? Can you think critically about what it produces? Can you manage the conversation without burning the context window?

Your relationship with systems

Can you design how AI connects to real data? Can you build experiences for other people, not just yourself?

Your relationship with organizations

Can you build reusable workflows? Can you lead change? Can you create the culture where AI and humans work together effectively?

Each level. One human skill.

Most AI proficiency frameworks measure tools or output. The 7 Levels of AI Proficiency anchors each level in a specific human capability that determines how effectively you direct AI. The skills come from Daniel Goleman's emotional intelligence research, applied to the question of how humans and AI partner together.

Daniel Goleman's 1995 work on emotional intelligence changed how leadership researchers think about high performance. Five competencies (self-awareness, self-management, social awareness, relationship management, and inspirational leadership when applied at scale) consistently outpredict IQ and credentials when measuring effectiveness in roles that require directing other people. Three decades of follow-up research, replicated across industries and countries, has confirmed the original finding.

That same finding turns out to predict how effectively a human directs AI. AI behaves like a thinking partner, responsive to the quality of the conversation. The skills that determine the quality of that conversation are exactly the EQ skills Goleman named. Each of the seven levels in our framework names one Goleman skill as the load-bearing capability for that stage. At Levels 1 and 2 it is self-awareness. By Level 7 it is inspirational leadership at organizational scale. The technical skills do not disappear. They become a smaller and smaller fraction of what determines the result.

Self-Awareness

Cadet (AI Aware) and Ensign (Prompt Engineer). Goleman's first EQ skill: knowing what you do not know, and noticing whether your prompt actually says what you mean. The Dunning-Kruger problem at Level 1 is a self-awareness problem, not a tools problem. Most professionals at this level think they are further ahead than they are. The fix is not a better prompt template. The fix is the discipline of noticing your own assumptions before you type. By Level 2, self-awareness becomes structural: you cannot write a clear prompt if you do not first know clearly what you actually want.

Self-Management

Lieutenant (Critical Thinker). Frustration tolerance and emotional regulation. When AI gives a bad answer, most people blame the tool and quit. The professionals who reach Level 3 push back, ask follow-ups, and stress-test ideas. That persistence is a learned EQ skill, not a personality trait. Self-management is what separates the user who treats AI as a vending machine from the user who treats it as a thinking partner. Without it, the conversation ends at the first weak answer. With it, the conversation produces something neither human nor AI could have produced alone.

Social Awareness

Commander (Context Engineer). Reading the environment. Sensing when a conversation has degraded, when context is lost, when the AI partner is missing the thread. The same skill that reads a room reads a context window. Social awareness at this level is the ability to notice that the model is no longer responding to what you actually need, and to start a fresh session with a clean context document instead of pushing through a degrading thread. This is empathy applied to a non-human partner. The pattern recognition is identical to the pattern recognition in human conversation.

Relationship Management

Captain (Design Thinker) and Admiral (Systems Integrator). Coaching, influence, and stakeholder navigation. Designing AI for other people requires empathy for their workflow, their fears, and the politics of their organization. You cannot build for others without it. At Level 5, relationship management shows up as designing the experience: what does the user need to feel safe trusting an AI workflow? What does the data permission boundary need to look like? At Level 6, the same skill scales up: how do you get organizational buy-in for a workflow that touches three departments? How do you handle the team member whose role feels threatened by the change?

Inspirational Leadership

Mission Director (AI Orchestrator). Culture change and psychological safety at organizational scale. The Orchestrator is not the most technical operator in the building. The Orchestrator is the most human one. Trust, vision, and the ability to make people feel safe in uncertainty determine whether AI integration succeeds or stalls. The technical pieces, by Level 7, are well understood. What separates the organizations that compound from the organizations that bolt AI onto the old hierarchy is whether someone at the top can hold the human change steady while the tools move underneath.

The skills are progressive but not exclusive. Self-awareness counts at every level; it just becomes table stakes after Level 2. Inspirational leadership is the apex requirement, but every level below benefits from a leader who has it. The progression names which skill goes from helpful to load-bearing at each stage. The lower-level skills do not get retired. They become assumed.

This is the moat. When AI capability changes, and it changes monthly, frameworks anchored in tools or prompt patterns or productivity multiples decay along with the model generation that defined them. Goleman's EQ skills do not. Self-management remains a real human capability whether you direct GPT-5, Claude Opus 5, or whatever ships in 2027. Measurement that compounds across model generations is the only kind worth investing a workforce strategy in.

It also explains why the top of the ladder is human work, not technical work. Every step up the 7 Levels asks more of the human side and less of the tooling side. By Level 7 the technical pieces are well understood. The work that remains is leadership, trust, and culture. The job of the future is not the most technical operator. It is the most human one.

How we mapped each Goleman skill to a level. The mapping is not arbitrary. It came from three years of corporate AI workshops, structured interviews with professionals at every stage of AI adoption, and a literature review of the failure modes that recur in AI deployment. The pattern that emerged was specific: at each stage of progression, one Goleman EQ skill consistently determined whether a professional moved up or stalled. At Levels 1 and 2, the stallers were the people who could not see their own assumptions. They wrote prompts that reflected what they thought they wanted, not what they actually needed, and they could not see the difference. Self-awareness was the unlock.

At Level 3, the stallers were the people who took a bad AI answer at face value and concluded the tool was not useful. They lacked the frustration tolerance to push back, ask follow-ups, and stress-test the response. Self-management was the unlock. At Level 4, the stallers were the people who could not read when the conversation had degraded. They kept pushing through 50-message threads, getting worse output, and blaming the model. Social awareness, the same skill that lets you read when a meeting has gone sideways, was the unlock.

At Levels 5 and 6, the stallers were the people who could build for themselves but could not build for others. The specific failure mode was empathy: they could not anticipate what other team members would need to feel safe trusting an AI workflow, and so adoption stalled. Relationship management, the same Goleman skill that determines effective coaching and influence, was the unlock. At Level 7, the stallers were the people who had built strong personal practice but could not get organizational change to stick. The failure mode was vision and trust at scale. Inspirational leadership, Goleman's apex skill, was the unlock.

What we did not do, and what most consultancies in this category do, was retrofit a familiar competency model onto AI proficiency for marketing convenience. Goleman's EQ skills did not become the answer because they sounded plausible. They became the answer because they kept showing up in the data. That data came from three sources: three years of workshop observation, structured interviews with roughly 200 professionals across roles and industries, and a cross-reference against the failure modes documented in the academic literature on technology adoption (Davis's 1989 Technology Acceptance Model, the 2003 UTAUT model of Venkatesh et al., and Rogers' Diffusion of Innovations). When the same Goleman skill kept appearing as the load-bearing capability at the same stage, we stopped guessing and named the mapping.

This methodology is the answer to the question every CEO asks when they first see the framework: "Is this just an opinion?" The honest answer is that the structure is empirical (where the levels split) and the labels are interpretive (which Goleman skill best names what is happening at each split). The framework will be revised as the data continues to come in from the assessment, the engagement cohorts, and the community. We expect refinement over the next 24 months. We do not expect the human-skill anchor to change. The human-skill anchor is what makes the framework what it is.

Three frameworks.

Three different anchors.

There are real alternatives in this category. Three are worth reading carefully if you are choosing a measurement instrument for your team. Here is how each one reads against The 7 Levels of AI Proficiency.

Larridin

Structure. Beginner (AI Aware), then Intermediate (AI Capable), then Advanced (AI Fluent), then Power User (AI Expert).

What it measures. Prompt sophistication, tool capabilities, workflow integration, and business outcomes expressed as productivity multiples (1x baseline through 30-50x for Power Users).

What it does well. A clear, concrete progression with business-outcome language that translates to a CFO. The productivity-multiple anchor is concrete and quantifiable, and the four-tier structure is easy to communicate in a single board slide.

How it differs from The 7 Levels of AI Proficiency. Seven stages instead of four, which gives more gradient at the meaningful transitions where most professionals get stuck. The move from Level 2 to Level 3, and from Level 4 to Level 5, are both larger capability jumps than a single tier transition can capture. Anchored in human capability instead of productivity multiples, which means the measurement does not decay as the underlying model generation advances. A Power User defined by 30-50x productivity in 2026 is defined by something different in 2027 when the models change. A Mission Director defined by inspirational leadership is the same capability whichever tools are in use.

Section AI

Structure. AI Novices, then AI Experimenters (~70% of workforce per their data), then AI Practitioners, then AI Experts (under 1% of workforce per their data).

What it measures. A mixed anchor of human capabilities (understanding of how AI works, safety awareness, prompt effectiveness), behaviors (frequency and depth of use), business outcomes (time saved, ROI use cases), and organizational readiness (strategy, tools, training, manager support).

What it does well. Empirical benchmarking from real workforce data. Section AI's report is the strongest published statistical baseline for "where is the population today." If you are building a board case for why AI training is urgent, the Experimenters-at-70% statistic is hard to argue with.

How it differs from The 7 Levels of AI Proficiency. Section AI's instrument is a benchmarking report paired with curriculum, not a standalone individual diagnostic. A team member cannot complete a Section AI assessment in 22 minutes and receive a personalized level. The 7 Levels of AI Proficiency is built as the diagnostic surface itself, with the assessment, the level descriptions, and the progression path designed for individual completion and team aggregation. Different jobs. Section AI's report tells you where the workforce sits. The 7 Levels of AI Proficiency tells each person on your team where they sit and what to learn next.

Anthropic

Structure. Not tiered. Four competencies (Delegation, Description, Discernment, Diligence) crossed with three engagement modes (automation, augmentation, agency), producing 24 specific behaviors. The companion AI Fluency Index reports on 9,830 anonymized conversations from January 2026.

What it measures. Collaboration behaviors observable in conversation. The strongest empirical pattern in their data: iteration and refinement appears in 85.7% of conversations and correlates with every other fluency behavior.

What it does well. Behaviorally specific and research-backed. Anthropic's framework comes with a Creative Commons license, a course at University College Cork and Ringling College, and an empirical index built from real Claude.ai conversation data. It is the most rigorous research instrument in the category.

How it differs from The 7 Levels of AI Proficiency. Not competing, complementary. The Anthropic instrument measures behavior frequency across a population. The 7 Levels of AI Proficiency measures progression stage for an individual. A team can take The 7 Levels of AI Proficiency and learn that two members are at Level 3 and three members are at Level 5; the same team running the Anthropic Index would learn what fraction of their conversations show iteration vs delegation. Different scales of measurement, different purposes. A mature workforce strategy uses both: a stage instrument for individual development paths, a behavior instrument for population-level research.

The honest read: every instrument in this category has merit. Larridin gives you a productivity-anchored maturity progression that translates to financial language. Section AI gives you the strongest published workforce benchmark. Anthropic gives you the most rigorous behavior-level research instrument.

The 7 Levels of AI Proficiency is the only one of the four that anchors each level in a specific human capability (Goleman's EQ skills), provides seven distinct progression stages instead of four, and functions as a standalone individual diagnostic paired with a complete delivery system: the assessment, the book, the engagement, and the workbook. Different positioning, different job. We built it because the other three did not solve the problem we were trying to solve, which is a team-deployable individual diagnostic where progression is anchored in capabilities that compound across model generations.

If your job is workforce benchmarking, read Section AI. If your job is academic research on AI behavior, read Anthropic. If your job is moving the people on your team from where they are today to where the work needs them to be, take the assessment.

Common questions about how this compares

Is this just CliftonStrengths or Hogan or DISC repackaged for AI? No. CliftonStrengths measures innate talent themes; Hogan measures personality dimensions; DISC measures behavioral style. None of those instruments measures a developmental progression of capability. The 7 Levels of AI Proficiency is closer to a competency-progression instrument than to a personality or talent assessment. The closest analog in human capability measurement is the FranklinCovey 7 Habits progression or the Lominger Leadership Architect competency stack. Both name a developmental progression where each stage builds on the prior. The 7 Levels of AI Proficiency does the same for the specific capability of directing AI well.

What about generic "AI literacy" programs? AI literacy programs typically teach what AI is, how it works at a high level, and how to use specific tools. Literacy is what we measure at Level 1. AI fluency, which most vendors use interchangeably with literacy, is closer to Level 2 or 3. Proficiency, which is what The 7 Levels of AI Proficiency measures, includes literacy and fluency as the lower stages and adds five more stages above them where the work moves from tool use to system design to organizational change. A team that has completed an AI literacy program is at Levels 1 to 2. The work above Level 2 is what proficiency measures and what literacy programs do not address. We have written more about this distinction in our pillar article on AI literacy vs AI fluency vs AI proficiency.

Why seven stages and not three or five? Three was too few because it collapsed three meaningfully different capabilities into one tier. The professional who can write a clear prompt (Level 2) and the professional who can manage a context window (Level 4) and the professional who can build reusable workflows (Level 6) are all "intermediate" in a three-tier model, but the actual capability differences between them are large. Five was tested in early drafts and broke at the same junctions. Seven was the first count where each stage corresponded to a capability that could be reliably distinguished from its neighbors in workshop observation and in the assessment. We tested eight and nine in pilots and found that the additional stages did not produce meaningfully different recommendations or interventions. Seven was the natural cardinality of the data.

How does this compare against the OECD AI Literacy Framework or the EU AI Skills taxonomy? The OECD framework is a policy-level taxonomy designed for cross-country workforce comparison. The EU taxonomy is a regulatory-adjacent classification of skills relevant to the EU AI Act. Both are valuable for their intended purposes. Neither is a deployable individual diagnostic. The 7 Levels of AI Proficiency operates at a different level: a working instrument that an individual completes in 22 minutes and a team can aggregate into a development plan within a week. Different jobs again. Policy taxonomies and team diagnostics are both needed; they do not substitute for each other.

What if my team is already past Level 4? Is The 7 Levels of AI Proficiency still useful? Yes. The framework was designed to remain useful at the top of the ladder, not just for novice and intermediate development. Levels 5, 6, and 7 are where most teams find the work that compounds. The capability split between Level 5 (designing AI experiences for others) and Level 6 (building reusable workflow infrastructure) is where most teams stall in 2026. The split between Level 6 and Level 7 (chaining workflows into pipelines that survive organizational change) is where most teams will stall in 2027 and 2028. The framework is built for the upper levels, not just the lower ones. The lower levels exist to give a clean baseline; the upper levels exist because that is where the meaningful work lives.

Will this framework still be valid in 2027 when the models change again? The technical content of each level will evolve. What "context engineering" looks like at Level 4 in 2027 may involve different tools, different patterns, different model affordances. The Goleman EQ skill that anchors Level 4, social awareness, will be the same. That is the point of the human-skill anchor. The structure of the ladder is durable across model generations because the rungs are named in terms of human capability, which compounds, not in terms of tool fluency, which does not. The framework will be revised. The anchors will not.

How does this compare to a graduate program in AI or an MBA with an AI concentration? Different timescale, different output. A graduate program is a 12 to 24 month investment in deep technical or strategic foundations. The 7 Levels of AI Proficiency is a working assessment that takes 22 minutes to complete and produces a development plan a team can act on within a week. They do not substitute for each other. A team member who completed an MBA with an AI concentration in 2024 likely lands at Level 3 or 4 on the assessment because graduate-program coverage typically reaches the upper bound of literacy and the lower bound of fluency, but not the design and orchestration capabilities at Levels 5 to 7. The framework gives that team member a clear path to where the work lives.

Can we use multiple frameworks at once? Yes, and most mature workforce strategies do. The 7 Levels of AI Proficiency works well alongside Section AI's report (their workforce statistics provide useful external benchmarking against your team's level distribution) and alongside the Anthropic AI Fluency Index (their behavior-frequency data complements our progression-stage data). The frameworks are not mutually exclusive. The question is not "which framework do we adopt" but "which instrument is right for which question." For individual development planning, use The 7 Levels of AI Proficiency. For workforce statistics and board context, use Section AI. For behavior-level research, use Anthropic. The category is large enough to support all three running in parallel, and a CHRO who reads all three reports together will arrive at stronger and more accurate recommendations than a CHRO who reads only one.

Three real products.

One founder.

Each case study shows which levels were demonstrated and why the human decisions counted more than the code.

AI Law Tracker

A free AI legislation tracker. 52 bills tracked. Updated daily. Referenced by Indiana state compliance officials.

Read case study →

The 7 Levels Assessment

An adaptive 7-level proficiency assessment with challenge coin badges and automated email segmentation. Two production sites.

Read case study →

Agent Builder

An interactive 16-step setup wizard for non-technical professionals. 6,500+ lines of production code. Built in one afternoon.

Read case study →

The framework, in your hands.

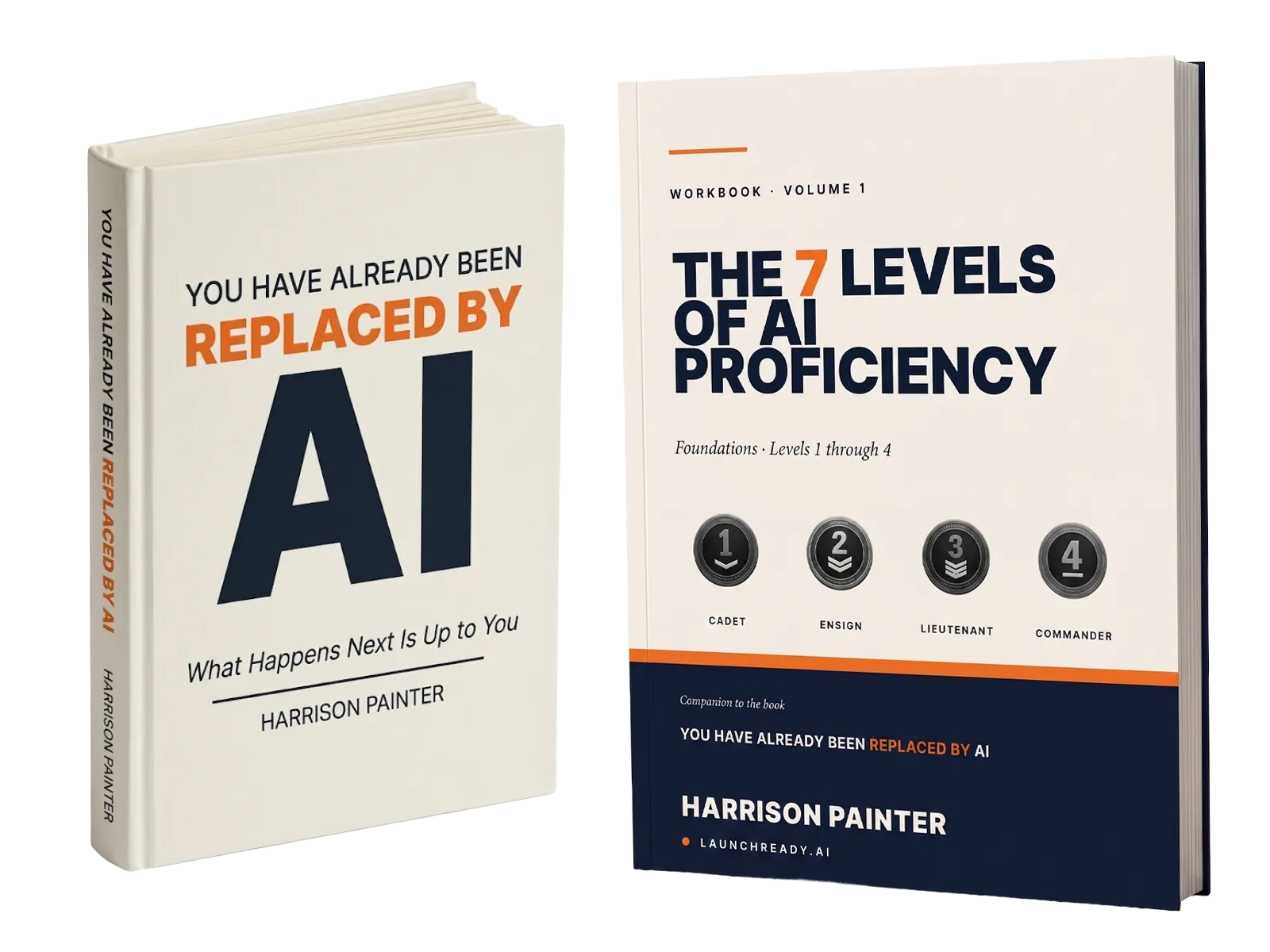

The full framework lives in You Have Already Been Replaced by AI, with the companion 7 Levels of AI Proficiency Workbook, Volume 1: Foundations. Read the book to learn the framework. Run the workbook to put Levels 1 through 4 into practice. The book is available now in paperback and Kindle editions, along with the companion workbook.

See the book →

What leaders say about Harrison Painter and LaunchReady.ai

You are the poster boy for AI. Barnabas is running your empire.

Find out where you stand.

Stage 03 of the journey is the assessment. Under ten minutes. A challenge coin and a written next step at the end. Stage 04 is a six-week engagement; twenty minutes on a call tells you whether it fits.

Common questions.

What is the 7 Levels of AI Proficiency framework?

The 7 Levels of AI Proficiency is a published framework developed by Harrison Painter at LaunchReady.ai. It maps how professionals progress from basic AI awareness (Level 1, Cadet) to full AI orchestration (Level 7, Mission Director). Each level is defined by a human skill, not a technical one.

How do I find my AI proficiency level?

Take the free 7 Levels Assessment at assess.launchready.ai. The assessment is adaptive. It asks five questions per level and stops when it finds your ceiling. Most people finish in under ten minutes.
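The stop-at-your-ceiling behavior described above can be sketched as a simple loop. This is not the actual assessment logic, which is not published here. The pass mark of four correct answers out of five and the `answer` callback are assumptions made for illustration.

```python
from typing import Callable

LEVELS = 7
QUESTIONS_PER_LEVEL = 5
PASS_MARK = 4  # assumption: the real threshold is not stated in the source


def find_ceiling(answer: Callable[[int, int], bool]) -> int:
    """Return the highest level passed, or 0 if Level 1 is not passed.

    `answer(level, question_index)` is a hypothetical callback that returns
    True when the respondent answers that question successfully.
    """
    ceiling = 0
    for level in range(1, LEVELS + 1):
        correct = sum(answer(level, q) for q in range(QUESTIONS_PER_LEVEL))
        if correct < PASS_MARK:
            break  # ceiling found; higher levels are never asked
        ceiling = level
    return ceiling


# Simulated respondent who handles every question up to Level 3.
print(find_ceiling(lambda level, question: level <= 3))
```

Stopping at the first failed level is what keeps the assessment short: a respondent whose ceiling is Level 3 sees the questions for Levels 1 through 4 and nothing beyond.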

What human skills does each AI level require?

Level 1 requires self-awareness. Level 2 requires structured thinking. Level 3 requires self-management. Level 4 requires social awareness. Level 5 requires design thinking. Level 6 requires stakeholder navigation. Level 7 requires inspirational leadership.

Who created the 7 Levels of AI Proficiency framework?

Harrison Painter, founder of LaunchReady.ai, created the 7 Levels of AI Proficiency framework. It is the foundation for the LaunchReady assessment, the 7 Levels Engagement, and the book You Have Already Been Replaced by AI, available now on Amazon in paperback, Kindle, and as a companion workbook.

How does the 7 Levels compare to other frameworks like Larridin, Section AI, or the Anthropic AI Fluency Index?

Most other AI proficiency frameworks anchor levels in technical capability: tools, prompting, model intuition, automation. The 7 Levels of AI Proficiency is the only published framework that anchors levels in human EQ skills: self-awareness, structured thinking, self-management, social awareness, design thinking, stakeholder navigation, and inspirational leadership. The reason is that AI work in 2026 is judgment, context, and stakeholder navigation as much as prompting. A framework that measures only the technical surface understates the work.

How is the 7 Levels framework used to measure team AI readiness?

A team measurement is built from individual assessments. Each team member takes the free 7 Levels assessment; the team-level distribution is the result. A written capability audit reads the distribution against the work the team actually owns and produces an intervention plan. Full methodology at how to measure AI readiness in a team.
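The step from individual results to a team-level distribution is a plain count of people per level. The sketch below assumes each member's result is already available as a level number from 1 to 7. The function name and the member names are hypothetical.

```python
from collections import Counter
from typing import Dict


def level_distribution(member_levels: Dict[str, int]) -> Dict[int, int]:
    """Count how many team members sit at each of the seven levels."""
    for name, level in member_levels.items():
        if not 1 <= level <= 7:
            raise ValueError(f"{name} has level {level}; expected 1 through 7")
    counts = Counter(member_levels.values())
    # Include empty levels so gaps in the distribution stay visible.
    return {level: counts[level] for level in range(1, 8)}


# Hypothetical team: two members at Level 3, three at Level 5.
team = {"Ana": 3, "Ben": 3, "Chen": 5, "Dee": 5, "Eli": 5}
print(level_distribution(team))
```

Reading that distribution against the work the team owns, and writing the intervention plan, remains the human part of the audit. The code only produces the count.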

What is an AI capability audit, and how does it relate to the 7 Levels framework?

An AI capability audit is a written diagnostic that measures team AI readiness against a published proficiency framework. The 7 Levels of AI Proficiency is the framework. The audit produces team-level distribution, function variance findings, role-specific findings, a written intervention plan, and a measurement plan for the next cycle. Full definition at what is an AI capability audit.

How long does it take to move up a level in the 7 Levels framework?

With targeted intervention, most people move up at least one level in six weeks. The structure is six weekly cohort sessions of 75 minutes each, plus between-session work in the person's actual workflow. Without structured practice, capability decays. The six-week cadence is what holds the gain in place long enough to measure it.

Is the 7 Levels framework a peer-reviewed research instrument?

The framework has face validity and is in active use across cohort engagements; psychometric validation is in progress. The discipline is to underclaim now and publish the science as it accumulates. Asking a reader to take the assessment is an invitation into the data, not a final-word claim. The 7 Levels Benchmark Report is planned for publication once the assessment crosses 1,000 verified completions.