From market gap to live product in 20 hours.

The Problem

AI regulation is accelerating. Federal and Indiana state bills could change how businesses use AI, hire with it, and store data through it. The only tools tracking this legislation are built for lawyers. Dense legal databases, paywalled analysis, no plain-English guidance.

Indiana business leaders who use AI, or whose teams do, had no resource designed for them. No way to understand what was coming, what it meant, or how exposed they were.

The Decision

I saw the gap on a Wednesday evening. By Friday night, the solution was live.

Not by hiring a developer. Not by commissioning an agency. I built it myself using Claude Code as the AI architect. Twenty hours of focused work across three days.

The question was not whether I could build it. The question was whether I could build something good enough to be the definitive resource for Indiana businesses navigating AI regulation.

Level 5 · Captain (Design Thinker)

This case study references the 7 Levels of AI Proficiency, the framework developed by LaunchReady.ai that maps how professionals progress from basic AI use to full orchestration. Inline tags throughout show which level a specific decision represents. Take the free assessment at assess.launchready.ai to find your own level.

What I Built

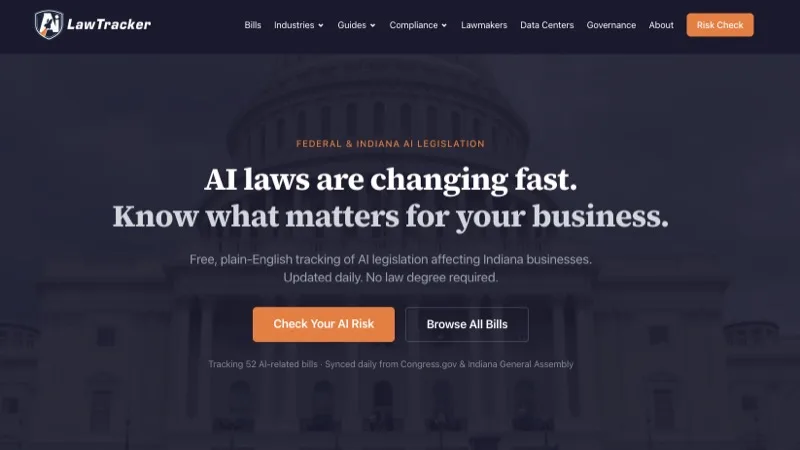

ailawtracker.org is a free AI legislation tracker designed for business leaders, not lawyers.

The core

Every active federal and Indiana AI bill, tracked with daily automated updates. Every bill gets a plain-English summary, a risk rating from 1 to 5, and industry tags. No legal jargon anywhere.

Level 4 · Commander (Context Engineer)

The guides

Four compliance guides: the full Indiana AI landscape, hiring obligations, a step-by-step compliance checklist, and the Illinois AI Act (which applies to Indiana employers who hire across state lines). The pillar page alone has 3,500+ words of original analysis.

The risk check

A 5-question assessment that gives any business a personalized AI compliance risk report in 2 minutes. This is the primary lead capture mechanism. The content is free. The personalized report requires an email.

Level 4 · Commander (Context Engineer)

The directory

Lawmaker profiles with photos, bios, committee assignments, and AI stance classifications. Every Indiana state legislator and relevant federal representative.

The map

Active and proposed data center projects across Indiana on an interactive map. Investment amounts, job counts, and status for each.

The automation

Daily bill sync pulls new legislation from Congress.gov and Open States, runs it through AI classification, and updates the site. Weekly newsletters go out every Monday with the top five bill changes. Zero ongoing human effort.

Level 6 · Admiral (Systems Integrator)

10,600+ lines of production code. 150+ dynamic pages. Five external API integrations.
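The daily sync and the weekly top-five selection described above can be sketched roughly as follows. This is a minimal sketch under stated assumptions, not the site's code: the type names, the `classify` callback standing in for the AI step, and the ranking rule (highest risk first, then most recently updated) are all hypothetical, and the actual Congress.gov and Open States requests are left out.

```typescript
// Hypothetical shapes; the site's real schema is not shown in this case study.
interface RawBill {
  source: "congress.gov" | "openstates";
  id: string;        // source-specific identifier
  title: string;
  status: string;
  updatedAt: string; // ISO 8601 date
}

interface TrackedBill extends RawBill {
  summary: string;   // plain-English summary
  risk: 1 | 2 | 3 | 4 | 5;
  industries: string[];
}

// Stand-in for the AI classification step; a real build would call a model here.
type Classifier = (bill: RawBill) => Pick<TrackedBill, "summary" | "risk" | "industries">;

// Merge freshly pulled bills into the store, classifying only new or changed ones.
function syncBills(
  stored: Record<string, TrackedBill>,
  pulled: RawBill[],
  classify: Classifier,
): TrackedBill[] {
  const changed: TrackedBill[] = [];
  for (const raw of pulled) {
    const key = raw.source + ":" + raw.id;
    const prev = stored[key];
    if (prev && prev.updatedAt === raw.updatedAt) continue; // unchanged, skip
    const next: TrackedBill = { ...raw, ...classify(raw) };
    stored[key] = next;
    changed.push(next);
  }
  return changed;
}

// Assumed ranking for the newsletter: highest risk first, then most recent.
function topFive(changes: TrackedBill[]): TrackedBill[] {
  return changes
    .slice()
    .sort((a, b) => b.risk - a.risk || Date.parse(b.updatedAt) - Date.parse(a.updatedAt))
    .slice(0, 5);
}
```

Skipping unchanged bills keeps the classification step, which is the part that costs API money, proportional to what actually moved that day.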

How It Happened

Day 1: The Foundation (March 19, ~6 hours)

Started at 4:20 PM with an empty Next.js scaffold.

By 5:01 PM, the entire core application was committed. Bill tracking, data pipeline, AI-powered summaries, filtering, and search. I defined what the platform needed to do and who it was for. Claude designed the technical architecture to deliver it.

Level 4 · Commander (Context Engineer)

The next decision was quality. The initial AI summaries used a cheaper model and read like Wikipedia entries. I evaluated the output, decided it was not good enough for the audience, and directed an upgrade to Claude Opus. The summaries got dramatically better.

Level 2 · Ensign (Prompt Engineer)

Then the email system. I started with Kit's forms API, hit limitations, switched to their subscribers API. Built an automated weekly newsletter. Six iterations in an hour. On the sixth iteration, I caught a critical issue: the broadcast was set to send to my entire email list, not just AI Law Tracker subscribers. Fixed it before a single email went out.

Level 3 · Lieutenant (Critical Thinker)

By 10:19 PM, the product was live, branded, and functional. Domain connected. Email system working. Design polished.
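The broadcast bug caught during the email iterations is the kind of mistake a small guard can rule out. The sketch below is illustrative only: `Subscriber`, `Broadcast`, and `resolveRecipients` are hypothetical names, not types from any email provider's API, and the tag value is made up. The idea is simply to refuse to send when no audience filter is set.

```typescript
// Hypothetical shapes for illustration; not any email provider's API types.
interface Subscriber {
  email: string;
  tags: string[];
}

interface Broadcast {
  subject: string;
  audienceTag: string | null; // null would mean "everyone on the list"
}

// Resolve recipients, refusing to send when no audience tag is set.
function resolveRecipients(list: Subscriber[], broadcast: Broadcast): string[] {
  if (broadcast.audienceTag === null) {
    throw new Error(
      'Broadcast "' + broadcast.subject + '" has no audience tag; refusing to send to the full list',
    );
  }
  const tag = broadcast.audienceTag;
  return list
    .filter((s) => s.tags.indexOf(tag) !== -1)
    .map((s) => s.email);
}
```

Failing loudly on a missing filter turns a silent "send to all" default into an error that shows up before any email goes out.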

Days 2 and 3: Depth and Credibility (March 20-21, ~14 hours)

Day 2 added the About and Governance pages. Day 3 was where the product hit full scope.

Morning: four compliance guide pages with full SEO markup. Five industry-specific pages. The interactive risk assessment tool with bot protection.

Afternoon: the lawmaker directory (profiles with photos sourced from official directories). The data center map (projects plotted on an interactive map). And the accuracy audit.

The accuracy audit is the part that matters most.

Level 3 · Lieutenant (Critical Thinker)

I directed a systematic review of every piece of AI-generated content on the site. Found and fixed:

- An incorrect statute citation (740 ILCS 180 corrected to 820 ILCS 42)

- A bill marked as "proposed" that was actually signed into law in August 2024

- Penalty language for the Video Interview Act that AI had fabricated

- Six federal legislator bios with hallucinated committee assignments

- Broken photo URLs from Congress.gov

Then I published all of it. The accuracy page at ailawtracker.org/accuracy shows the methodology, the audit log, and the known limitations. Transparency is the whole point.
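One way to structure an audit like this is to compare each AI-generated fact against a hand-verified value and log every mismatch. The sketch below assumes hypothetical record shapes (`BillFacts`, `AuditFinding`) and a hypothetical bill id; it is not the site's audit tooling, and the sample citation values are borrowed from the list above purely as test data.

```typescript
// Hypothetical record shapes; field names are illustrative, not the site's schema.
interface BillFacts {
  citation: string;
  status: "proposed" | "signed" | "failed";
}

interface AuditFinding {
  billId: string;
  field: keyof BillFacts;
  generated: string; // what the AI-generated content said
  verified: string;  // what the human reviewer confirmed
}

// Compare generated facts with verified facts and record each disagreement.
function auditBill(billId: string, generated: BillFacts, verified: BillFacts): AuditFinding[] {
  const fields: (keyof BillFacts)[] = ["citation", "status"];
  const findings: AuditFinding[] = [];
  for (const field of fields) {
    if (generated[field] !== verified[field]) {
      findings.push({ billId, field, generated: generated[field], verified: verified[field] });
    }
  }
  return findings;
}

// Usage with sample data: one citation mismatch is reported.
const findings = auditBill(
  "example-bill",
  { citation: "740 ILCS 180", status: "signed" },
  { citation: "820 ILCS 42", status: "signed" },
);
```

Keeping both the generated and the verified value in each finding is what makes a published audit log possible: readers can see what was wrong, not only that something was fixed.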

Final blended accuracy score: ~90/100.

What 20 Hours Actually Looks Like

Twenty hours sounds fast. It is fast. It is not easy, and it is not passive.

AI did not build this while I watched. I made hundreds of decisions across those 20 hours. Every one of them required judgment that the AI could not provide on its own.

Research and strategic thinking (~4 hours)

Before a single line of code existed, I had to understand the problem. Who is the audience? What do they actually need? What exists already and why does it fail them? What would make someone bookmark this site and come back? I researched every existing legislation tracker. They were all built for lawyers. That gap defined every design decision that followed.

Product design and architecture (~3 hours)

What pages does this site need? What is the information hierarchy? How should bills be organized so a non-lawyer can find what matters to them? Where does the risk assessment fit in the user journey? What gets gated behind an email and what stays free? These are not technical questions. They are business questions. AI cannot answer them. I had to think through the experience from the user's perspective, not the builder's.

Content strategy and writing (~4 hours)

Four compliance guides do not write themselves. AI generated drafts. I rewrote sections, restructured arguments, cut jargon, and made sure every paragraph served the reader. The pillar guide alone went through multiple rounds of editing. The risk assessment questions had to be carefully designed to produce meaningful, personalized results without requiring a law degree to answer.

Quality control and accuracy auditing (~3 hours)

This is the part most people skip. AI-generated content contains errors. Period. I found fabricated penalty language, wrong statute citations, hallucinated committee assignments, and a bill marked as proposed that had already been signed into law. Every piece of AI-generated content on the site had to be verified. I published the methodology and the audit log because transparency is the only way to earn trust.

Design decisions and UX iteration (~3 hours)

Button hierarchy. Mobile layout. Filter behavior. Card design. Font choices. Color contrast. How the activity feed should work. Whether to auto-advance users through the risk assessment or let them control the pace. None of this is code. All of it determines whether someone uses the site or bounces in five seconds.

Integration and automation design (~3 hours)

Six email system iterations before it worked right. Catching the broadcast filter bug that would have emailed my entire subscriber list. Switching from Kit to Resend mid-build when the first tool hit limitations. Setting up daily sync automation and weekly newsletters. Real-time decisions about tools, architecture, and tradeoffs.

The AI wrote the code. The code only mattered because a human decided what to build, who to build it for, what quality bar to hold it to, and what mistakes to catch before they went live.

That is the difference between using AI and directing AI. Twenty hours of direction, not twenty hours of prompting.

What This Would Cost Traditionally

| Approach | Estimated cost | Timeline |
|---|---|---|
| Mid-level freelancer ($75/hr) | $6,000 to $10,000 | 3 to 6 weeks |
| US-based agency | $15,000 to $30,000 | 2 to 4 months |
| Harrison + Claude Code | ~$2-3 API cost | 20 hours across 3 days |

The freelancer estimate assumes 80 to 130 hours of work: data pipeline, AI integration, frontend, guide content, risk assessment, lawmaker directory, interactive map, email automation, SEO, design, and QA.
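The freelancer range follows directly from the hours and the hourly rate. A quick check, using only the figures stated above (the function name is just for illustration):

```typescript
// Multiply an hours range by an hourly rate to get a cost range in USD.
function freelancerCost(hours: { low: number; high: number }, ratePerHour: number) {
  return { low: hours.low * ratePerHour, high: hours.high * ratePerHour };
}

// 80 to 130 hours at $75/hr
const estimate = freelancerCost({ low: 80, high: 130 }, 75);
// estimate.low  === 6000
// estimate.high === 9750
```

The upper bound works out to $9,750, which the table presumably rounds to $10,000.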

Total Anthropic API spend for the entire build: approximately two to three dollars. Monthly operating cost: six to thirty-six dollars depending on bill volume.

The Decisions That Shaped It

Indiana-first, not national

A national tracker would be shallow. An Indiana tracker could be the definitive resource. Depth over breadth.

Level 5 · Captain (Design Thinker)

Plain English, not legal language

This is the core differentiator. Every summary is written for a business leader who needs to know what a bill means for them, not for a lawyer who needs to cite precedent.

Level 2 · Ensign (Prompt Engineer)

Free content, gated assessment

The guides and bill data are completely free. The personalized risk report requires an email. Give value first. Capture leads through something worth exchanging an email for.

Accuracy audit before distribution

AI-generated content needs human verification. I found errors. I fixed them. I published the methodology. If the tool is going to be a trusted resource, trust has to be earned with transparency.

Level 3 · Lieutenant (Critical Thinker)

Automate everything repeatable

The site updates itself. Bills sync daily. Newsletters send weekly. My ongoing time commitment is near zero. The 20-hour investment keeps compounding.

What This Means for You

This case study is not about building software. It is about speed to market.

I identified a gap on a Wednesday. By Friday, the gap was filled. Not with a prototype. With a production platform that updates itself, captures leads, and serves a real audience.

Any business leader could do this with the right skills. Not coding skills. Strategic skills. Knowing what to build, who it is for, and what "good enough" looks like. Directing AI rather than being impressed by it. Catching the mistakes it makes. Making the calls it cannot.

The AI wrote 10,600 lines of code. I made every decision that determined whether those lines were worth writing.

That is Level 6.

Direction, not coding. Strategy, not tools.

Frequently Asked

Is there a free AI legislation tracker?

Yes. ailawtracker.org tracks federal and Indiana AI bills with daily automated updates, plain-English summaries, risk ratings, and industry tags. It is free to use. The personalized risk assessment requires an email address.

How long does it take to build with Claude Code?

The AI Law Tracker was built in 20 hours across three days. That included research, architecture, 10,600+ lines of code, 150+ dynamic pages, four compliance guides, a lawmaker directory, an interactive map, and a risk assessment tool. The speed comes from directing AI rather than writing code manually.

What does AI compliance cost for businesses?

Traditional legislation trackers are built for lawyers and charge thousands per year. The AI Law Tracker was built for approximately two to three dollars in API costs and operates for six to thirty-six dollars per month depending on bill volume. It is free for all users.

The 7 Levels of AI Proficiency

A measurable ladder from where your team is today to where the work needs them to be. Each level is defined by a human skill, not a technical one.
